Understanding Attention Mechanisms – Part 4: Turning Similarity Scores into Attention Weights

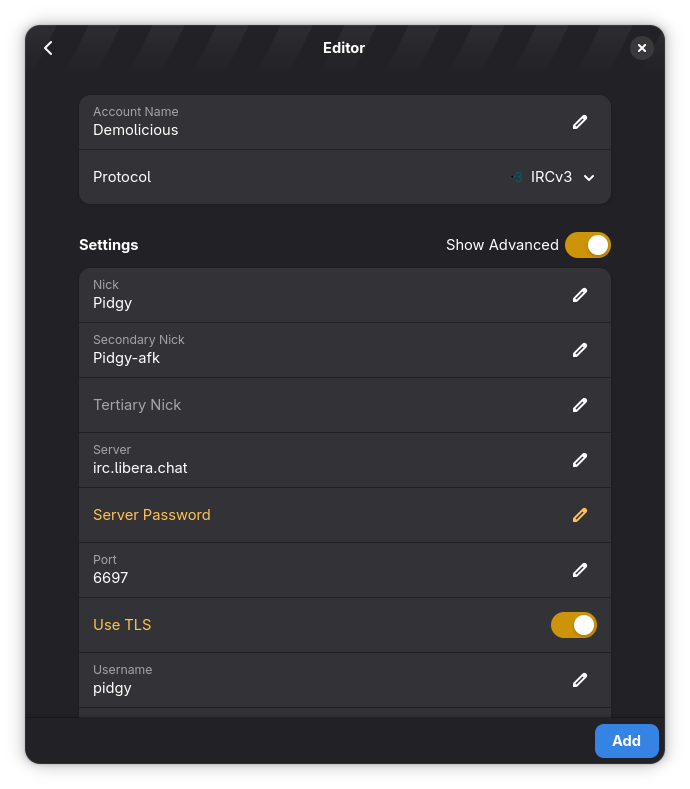

Source: DEV Community

In the previous article, we explored the benefits of using the dot product instead of cosine similarity for attention. Let's dig in further and see how our diagram looks after using the dot product.

We simply multiply each pair of output values and add them together:

(-0.76 × 0.91) + (0.75 × 0.38)

This gives us approximately -0.41. Likewise, we can compute a similarity score using the dot product between the second input word “go” and the EOS token. This gives us 0.01.

Now that we have the scores, let’s see how to use them. The score between “go” and EOS (0.01) is higher than the score between “let’s” and EOS (-0.41). Since the score for “go” is higher, we want the encoding for “go” to have more influence on the first word that comes out of the decoder.

We can achieve this by passing the scores through the softmax function. The softmax function gives us values between 0 and 1, and they all add up to 1. So, we can think of the softmax function as a way to determine what percentage of each encoded in
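
To make the arithmetic concrete, here is a minimal Python sketch of the two steps described above: the dot product that produces the similarity score, and the softmax that turns the scores into weights. The helper names `dot` and `softmax` and the variable names are illustrative assumptions, not code from the article. The output values for “go” are not given in this part, so its score (0.01) is taken directly from the text rather than computed.

```python
import math

def dot(a, b):
    """Multiply each pair of values and add the products together."""
    return sum(x * y for x, y in zip(a, b))

def softmax(scores):
    """Map scores to values between 0 and 1 that add up to 1."""
    largest = max(scores)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# The two pairs of output values quoted in the article for "let's" and EOS.
# Which vector belongs to which token is an assumption; the dot product
# is symmetric, so the score is the same either way.
values_a = [-0.76, 0.75]
values_b = [0.91, 0.38]

score_lets = dot(values_a, values_b)  # about -0.41
score_go = 0.01                       # taken from the article, not recomputed

weights = softmax([score_lets, score_go])
print(f"score for let's: {score_lets:.2f}")
print(f"weights (let's, go): {weights[0]:.2f}, {weights[1]:.2f}")
```

With these scores, the weights come out to roughly 0.40 for “let’s” and 0.60 for “go”, which matches the intuition that the higher score should get the larger share.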